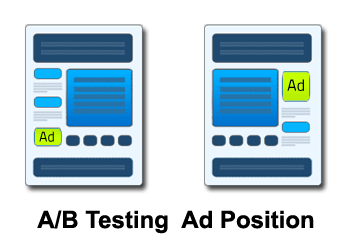

A/B testing has quickly become a fundamental method that marketers use to optimize landing pages and improve conversions. Rather than relying on gut feel and conventional wisdom, A/B testing shows you actual, statistics-backed results that you can use to make appropriate adjustments.

Also known as split testing, A/B testing can make email marketing campaigns more effective by improving open rates and click rates. It can likewise be used to improve website ad monetization. For web pages, it allows you to modify individual page elements and measure the effect of those changes on your conversion rates.

There is quite a bit of information about this topic on the Internet. For this reason, we have elected to take a look at some of the best material that’s already out there. Each of the articles discussed below addresses an essential piece of the A/B testing puzzle.

This article by Cara Olson, published on Marketingland.com, is a good introduction to the topic. It points out that A/B testing is the simplest, yet most valuable, type of testing you can do. Although this article focuses on using the process for email, the same concept can be applied to other areas, such as optimizing landing pages or websites.

Olson points out quite a few elements of an email campaign that can be tested in this manner. These include timing (i.e., when you send out the emails), product images (or creative), offers and subject lines.

2) Advantages and Disadvantages of A/B Split Testing for Landing Pages

This article is by Tim Ash and can be found on the EM+C blog. He focuses on using A/B testing for landing pages and points out this approach’s drawbacks and advantages.

Ash compares split testing with another popular option, multivariate testing. A/B testing is the simpler and more straightforward method, while multivariate testing is somewhat more complicated. With the multivariate approach, marketers can test several elements at once, rather than just two versions of a single element as in traditional A/B testing.

For example, on a landing page, an A/B test might involve testing one headline against another. With multivariate testing, on the other hand, you can test not only the headline, but also the images and the call to action, simultaneously.

While A/B testing is simpler to apply, it can slow down the optimization process compared with multivariate testing. Because you are testing only one element at a time, you may have to conduct many tests over a longer period.
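The trade-off between the two approaches can be made concrete by counting what each one has to test. The following is only a minimal sketch: the element names and their versions are hypothetical placeholders, not taken from Ash's article, and it assumes each A/B test compares one new version of a single element against the current one.

```python
from itertools import product

# Hypothetical page elements and candidate versions (illustrative only).
elements = {
    "headline": ["Headline A", "Headline B"],
    "image": ["Photo", "Illustration"],
    "call_to_action": ["Buy now", "Start free trial"],
}


def multivariate_variants(elements):
    """Return every combination of element versions as a list of dicts."""
    names = list(elements)
    return [dict(zip(names, combo)) for combo in product(*elements.values())]


def sequential_ab_tests(elements):
    """Count the A/B tests needed when each alternative version of each
    element is tested on its own against the current version."""
    return sum(len(versions) - 1 for versions in elements.values())


variants = multivariate_variants(elements)
print("Page variants in one multivariate test:", len(variants))
print("Separate A/B tests, one element at a time:", sequential_ab_tests(elements))
```

With these made-up inputs, a single multivariate test has to split traffic across eight page variants, while the A/B route needs three separate tests run one after another. That is roughly why multivariate testing tends to suit sites with more traffic and A/B testing tends to take longer.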

Despite these disadvantages, Ash recommends A/B testing as the best way to start out. More experienced marketers, or those with larger budgets, may want to add multivariate testing to their arsenal of testing methods.

3) 10 A/B Testing Tools For Small Businesses

This article by Sig Ueland, published on the Practical Ecommerce site, gives some helpful hints for tools that make A/B testing easier.

The tools he identifies include Google Analytics Content Experiments, which lets you test multiple versions of a single page, each with a separate URL; Unbounce, a tool that makes it easy to build quality landing pages; and Genetify, for developers who want to perform A/B tests on a site using JavaScript.

This article makes some solid recommendations for the best A/B testing tools that are currently available.

4) The A/B Test: Inside Technology That’s Changing the Rules of Business

In this article from Wired.com, Brian Christian looks at some of the ways that A/B testing is changing fields such as politics and business. He describes how the Obama team used this technique to launch one of the most successful campaign drives in the history of election campaigns.

In discussing the Obama campaign, Christian makes one important point: initial personal instincts can be very wrong. For example, it was originally assumed that footage of a rally would be more effective than still photos, yet the opposite turned out to be true. This is the type of outcome that any experienced A/B tester will recognize. A/B testing takes things out of the realm of guesswork and into the realm of data-based decision making.

In this article, Christian also looks at how the online payment platform WePay used A/B testing to improve its homepage. By testing a variety of homepages, the company was able to come up with increasingly effective ones. This is a tried and tested approach, and something that is fundamental to the process of A/B testing.

5) Putting A/B Testing in its Place

This article by Jakob Nielsen is from the Nielsen Norman Group website. It offers a concise look at some of the advantages and disadvantages of A/B testing.

Nielsen points out that A/B testing is a cost-effective approach that gives a clear-cut view of the actual, verifiable behavior of visitors or readers. It can also measure even slight differences in performance between variations, and those differences can add up to something quite significant in the long run.
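To decide whether a slight difference between two variations is real or just noise, testers commonly apply a significance test. The sketch below uses a pooled two-proportion z-test, which is one standard choice and not something Nielsen's article prescribes. The function name and the visitor and conversion counts are hypothetical.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Pooled two-proportion z-test.

    conv_a, conv_b: conversions observed for variations A and B.
    n_a, n_b: visitors shown variations A and B.
    Returns (z, two_sided_p_value).
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled conversion rate under the assumption that A and B perform the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: 2.0% vs 2.5% conversion on 10,000 visitors each.
z, p = two_proportion_z_test(conv_a=200, n_a=10_000, conv_b=250, n_b=10_000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value suggests the gap between the variations is unlikely to be chance alone. With small samples, the same half-point gap would usually not reach significance, which is why slight differences need a lot of traffic to detect.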

He also identifies some limitations of A/B testing, such as the fact that it’s best for measuring projects with a single key performance indicator (KPI) such as people opting in to an online newsletter or purchasing something on a retail site.

As Nielsen says, however, there are some things that you can’t effectively measure this way. These include less tangible benefits, such as increasing awareness of a brand or improving a company’s reputation. Another drawback is that A/B testing is only appropriate when you have a design that is already in place.

Nielsen contrasts this with paper prototyping, a method that lets you test various user models even before they are fully operational. He concludes that the best approach is to use paper prototyping in the initial phases of an endeavor and then use A/B testing in the final phase.

6) 7 A/B Blunders That Even Experts Make

Neil Patel of KISSMetrics advises anyone who does A/B testing to be aware of the mistakes this methodology invites, and points out some common blunders that even expert users make.

One of these mistakes is relying on split tests performed by others without fully understanding the results. The example Patel gives is confusing clicks with conversions. One ad may outperform another by a certain percentage, but you have to pay attention to whether this refers to conversions or merely clicks.
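A quick calculation shows how the two metrics can point in opposite directions. This is only an illustrative sketch: the ad figures are invented, and the conversion rate is assumed to be measured per impression.

```python
def rates(impressions, clicks, conversions):
    """Compute click-through and conversion rates, both per impression."""
    return {
        "click_through_rate": clicks / impressions,
        "conversion_rate": conversions / impressions,
    }


def winner(metric, ad_a, ad_b):
    """Return 'A' or 'B' depending on which ad scores higher on the metric."""
    return "A" if ad_a[metric] > ad_b[metric] else "B"


# Hypothetical results for two ads shown the same number of times.
ad_a = rates(impressions=10_000, clicks=500, conversions=20)
ad_b = rates(impressions=10_000, clicks=400, conversions=32)

print("Winner on clicks:", winner("click_through_rate", ad_a, ad_b))
print("Winner on conversions:", winner("conversion_rate", ad_a, ad_b))
```

In this made-up case, ad A draws more clicks while ad B produces more conversions, so a headline figure like "outperformed by 25%" means little until you know which metric it describes.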

Another mistake is expecting too much from very small changes. While a small change sometimes pays off, in most cases you have to make drastic changes to see significant results, so it is important to look at the bigger picture.

Patel also makes the astute point that you can make better use of your time by surveying your customers before you do any testing. Simply testing random pages, ads or emails before consulting with your customer base is a common error.

This article provides some good insights into what not to do when running a split test.

7) Simple A/B Testing in WordPress With Google Analytics Site Experiments

In this article, Stu Miller discusses how to do A/B testing on a WordPress blog using Google Analytics. He explains why he thinks it’s preferable to use Google Analytics Site Experiments rather than a WordPress plugin such as Max A/B that can also be used for split testing. As he explains, if you already use Google Analytics there’s no need to complicate things by installing a plugin.

Most webmasters are familiar with Google Analytics, but fewer people know about Google Analytics Site Experiments, which is designed specifically for testing. Miller explains how to use this tool with a WordPress blog.

8) 7 A/B Testing Resources For Startups

In this article from Mashable.com (originally published on Dyn.com), Jolie O’Dell advises startups on some of the most useful tools for A/B testing.

What’s especially useful about this article is that O’Dell suggests different resources for people with different levels of technical expertise. Some recommendations require no coding, such as Optimizely, Performable and Unbounce. Others, like Google’s GWO, require basic technical expertise to pull off split tests. Still others, like the Genetify and Vanity tools, require you to be an experienced coder.

This article also contains introductory videos to accompany each of these recommendations.

9) 11 Common A/B Testing Myths

In one of the above articles, we talked about common mistakes made by people who do split testing. In this article by Ginny Soskey, we learn about some A/B testing myths.

You probably don’t believe in all these myths, but there’s a good chance you are susceptible to at least a couple of them. One common misconception is that your gut feeling is more reliable than split testing. As we saw in the above example from the Obama campaign, this is often not the case.

Another myth takes us to the opposite extreme: the notion that we must split test every single variable. This can cause us to waste valuable time testing insignificant differences, such as headlines where only minor changes have been made.

Among the other myths uncovered in this article, one is especially important to note: the idea that you can rely on testing done by other businesses or websites. While it can be tempting to save yourself the work of doing your own testing, every situation is a little different. The results of tests on one website may not apply to a different website, even when both websites are in the same niche.

This article effectively warns us about A/B testing myths that can be costly to believe in.

So there we have it – 9 awesome articles about A/B Testing. Did I miss any important information or a common issue regarding A/B Testing? Feel free to post a comment!

Ankit is a co-founder @ AdPushup (a tool which helps online publishers optimize ad revenues) and loves online marketing & growth hacking.
