Bot traffic, or non-human traffic, is yet another part of the larger ad fraud problem.

Publishers often complain that their websites, ads, and campaigns are not performing as expected. Many believe that non-human traffic, also known as bot traffic, is one of the reasons.

About 78% of publishers report bot traffic on their sites, yet only 38.4% purchase traffic.

Even though publishers suspect the cause, the problem persists because they don’t understand how and why bot traffic undermines their efforts. So what does this lack of awareness lead to?

The answer is ad revenue loss and website quality decline.

That’s why we’ve compiled this post to answer some essential questions about bot traffic: what it is, what purpose it serves, and how to block or remove it.

What is Bot Traffic?

Bot traffic simply means non-human visitors are coming to your website. Whether yours is a big, popular website or a new one, a certain percentage of bots will pay you a visit at some point.

Traffic bots, or web robots, are automated to visit premium websites and pose as targetable human audiences. Some bots perform repetitive tasks such as copying content, clicking ads, posting comments, or any other activity that can feed into malvertising.

By some estimates, almost 29% of website traffic is bot traffic. That implies a similar share of budget may be going toward processing artificial pageviews and ad clicks, which eventually drives up (worsens) bounce rate (more on this below).

An acceptable bounce rate for a website ranges from 45% to 65%. Normally, such a figure would look unimpressive. Yet publishers, advertisers, and marketers have grown accustomed to this range. Why?

Because website owners know that not all of their traffic can be real. Across the web as a whole, roughly half of all traffic is bot traffic; in 2016, bots accounted for 51.8% of web traffic.

These are staggering numbers, and they show how deeply bot traffic has penetrated the web.

How Can Bot Traffic Hurt Analytics?

Unauthorized bot traffic can negatively impact analytics metrics such as bounce rate, conversions, page views, session duration, and geolocation of users.

These deviations can be very frustrating for a publisher or site owner. It’s hard to measure a site’s real performance when it’s being flooded with bot activity.

Six Types of Bots to Watch Out For

1. Click Bots

These bots make fraudulent ad clicks and are used for click spamming. They are the most threatening bot type for web publishers, especially those following the PPC model, because they skew analytics data and erode ad budgets.

2. Download Bots

These bots also tamper with analytics-generated user engagement data, but instead of inflating ad clicks, they inflate download counts. If a free ebook download is your end conversion, these bots are likely to corrupt your conversion data.

3. Spam Bots

This is the most common bot type. Spam bots disrupt user engagement by distributing unwanted content such as spam comments, phishing emails, ads, unusual website redirects, and negative SEO against competitors.

4. Spy Bots

Perhaps the most despised type, these bots mine individual or business data. They harvest information such as email addresses from websites, forums, chat rooms, and other online spaces.

5. Scraper Bots

These bots visit a website with malicious intent: stealing your content. They are deployed by third-party scrapers employed by competitors to steal content, product catalogs, and prices. The stolen content is then repurposed and published elsewhere.

6. Imposter Bots

These bots pose as genuine visitors in order to bypass online security measures. They are largely responsible for attacks such as distributed denial of service (DDoS). They also inject spyware into websites or masquerade as search engine crawlers.

AdPushup helps publishers increase their ad revenue. We do this through our machine-learning algorithm, automated A/B testing, header bidding, innovative ad formats, and in-house ad operations expertise. Feel free to reach out and ask our experts.

Good vs. Bad Bots

It’s important to remember that not all bots are bad.

The good ones are created to perform operational tasks such as scraping old data, content hygiene, and data capturing. Examples of good bots include backlink checker bots, monitoring bots, social network bots, feed fetcher bots, and search engine crawlers. Good bots are necessary for users to have a fruitful experience browsing the Internet.

Bad bots, on the other hand, carry out the spammy and fraudulent activities described above, causing losses for both publishers and advertisers.

Here’s an infographic on how good bots and bad bots differ.

How to Identify Bot Traffic?

The bad news is that bad bots are getting smarter. According to Imperva’s Bad Bot Report 2020, bots made up almost 40% of Internet traffic, and bad bots accounted for the larger share of it.

Bot traffic hits websites every hour, even while you’re reading this. At the beginning of this post, we mentioned that many publishers don’t understand why and how bot traffic affects their efforts, or how to deal with it. So let’s start with the first question: how can publishers identify bot traffic?

#1 Recheck Page Load Speed

You may have run a page-load speed test a week ago. Results naturally vary a little from one run to the next. But if your next test shows a considerable slowdown without any major changes to your site, chances are you’ve been hit by bot traffic.

A site can load slowly for many reasons. However, when it comes to detecting bot traffic, checking page-load speed is the first step. A large number of bots may be hitting your servers at once, straining them and possibly taking them offline.
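To make “a considerable slowdown” a little less subjective, you could compare the median of a few fresh measurements against the median of earlier ones, which smooths over the run-to-run variation described above. This is a minimal sketch that assumes you already have load times in milliseconds from whatever speed-test tool you use; the function name and the 1.5x threshold are illustrative choices, not an industry standard.

```python
from statistics import median


def is_significant_slowdown(baseline_ms, current_ms, threshold=1.5):
    """Return True if the median of the current load times exceeds the
    median of the baseline load times by more than `threshold` times.

    The 1.5x default threshold is an illustrative assumption; tune it
    against your own site's normal variation.
    """
    if not baseline_ms or not current_ms:
        raise ValueError("need at least one baseline and one current sample")
    # Medians are less sensitive to a single slow outlier run than means.
    return median(current_ms) > threshold * median(baseline_ms)


# Load times (ms) from last week's tests vs. today's tests (made-up numbers).
last_week = [1200, 1350, 1280, 1310]
today = [2100, 2400, 2250]
print(is_significant_slowdown(last_week, today))  # True
```

A flagged slowdown is only a prompt to dig further, since a slow site can have many causes besides bots.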

#2 Keep Tabs On Certain Metrics

If you notice a sudden rise in your traffic count and bounce rate at the same time, bots are probably visiting your site. Here, a high traffic count means either a large number of bots or the same bots coming to your site again and again.

A high bounce rate, in turn, points to non-human visitors that arrive for no purpose and leave without exploring other pages. A sudden change in session-duration behavior also indicates bot traffic.

Let’s say your site usually serves long-form content, so your average session duration lies between two and five minutes. If you see an unexpected dip, bot traffic could be the reason. Keep an eye on other common metrics alongside these, too.
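The checks in this section can be combined into a simple rule that compares each day with a trailing average. Here’s a minimal sketch, assuming you export daily sessions, bounce rate, and average session duration from your analytics tool; the `DailyMetrics` structure and all thresholds are hypothetical and should be tuned to your own traffic.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class DailyMetrics:
    day: str
    sessions: int
    bounce_rate: float      # fraction between 0.0 and 1.0
    avg_session_sec: float  # average session duration in seconds


def flag_suspicious_days(history, window=7, traffic_jump=1.5,
                         bounce_jump=1.2, duration_drop=0.6):
    """Flag days whose metrics deviate sharply from the trailing average.

    A day is flagged if sessions AND bounce rate both jump above their
    trailing averages, or if average session duration drops well below its
    trailing average. All thresholds are illustrative assumptions.
    """
    flagged = []
    for i in range(window, len(history)):
        past, today = history[i - window:i], history[i]
        base_sessions = mean(d.sessions for d in past)
        base_bounce = mean(d.bounce_rate for d in past)
        base_duration = mean(d.avg_session_sec for d in past)

        traffic_and_bounce_spike = (
            today.sessions > traffic_jump * base_sessions
            and today.bounce_rate > bounce_jump * base_bounce
        )
        duration_dip = today.avg_session_sec < duration_drop * base_duration
        if traffic_and_bounce_spike or duration_dip:
            flagged.append(today.day)
    return flagged


# Seven ordinary days, then a traffic + bounce spike, a normal day,
# and a day where session duration collapses (made-up numbers).
history = [DailyMetrics(f"d{n}", 1000, 0.50, 180) for n in range(1, 8)]
history += [
    DailyMetrics("d8", 2000, 0.80, 170),
    DailyMetrics("d9", 1000, 0.50, 180),
    DailyMetrics("d10", 1000, 0.50, 60),
]
print(flag_suspicious_days(history))  # ['d8', 'd10']
```

Because a spike day stays in the trailing window for a week, it slightly raises the baseline for the days after it; a median-based baseline would be more robust if that matters for your traffic.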

#3 Verify Traffic Sources And IP Address

Metrics aren’t the only clue; traffic data sources can also act as warning signs. A regular, high volume of visits from the same IP addresses strongly suggests you’re receiving bot traffic. Tools like Deep Log Analyzer can help you dig through raw server logs so you can blacklist the offending IPs.
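If you have raw access logs, counting requests per IP is straightforward even without a dedicated tool. This sketch assumes logs in the Common or Combined Log Format (as commonly written by Apache and Nginx), where the client IP is the first field. It only handles IPv4, and the `max_requests` cutoff is a hypothetical value you would choose for your own traffic levels.

```python
import re
from collections import Counter

# Client IPv4 address at the start of a Common/Combined Log Format line.
LOG_IP = re.compile(r"^(\d{1,3}(?:\.\d{1,3}){3})\s")


def heavy_hitters(log_lines, max_requests):
    """Return {ip: request_count} for IPs with more than `max_requests` hits."""
    counts = Counter()
    for line in log_lines:
        match = LOG_IP.match(line)
        if match:
            counts[match.group(1)] += 1
    return {ip: n for ip, n in counts.items() if n > max_requests}


# Sample lines using documentation-only IP ranges (made-up data).
sample = [
    '203.0.113.7 - - [10/Oct/2023:13:55:36 +0000] "GET /page-1 HTTP/1.1" 200 512',
] * 5 + [
    '198.51.100.2 - - [10/Oct/2023:13:56:01 +0000] "GET / HTTP/1.1" 200 1024',
]
print(heavy_hitters(sample, max_requests=3))  # {'203.0.113.7': 5}
```

In practice you would read lines from your log file and limit the count to a time window. Shared IPs (offices, mobile carriers, VPNs) can also produce many legitimate hits, so review flagged addresses before blacklisting them.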

Odd traffic sources are the next thing to check. Suppose most of your traffic comes from the US and Asian countries. A sudden rise in traffic from Arab (non-English-speaking) countries you don’t normally reach may be one indication.

All of this can be checked in website analytics tools like Google Analytics. If you’re new to GA, get acquainted with the platform first, then move on to using it to identify bot traffic.

#4 Test For Content Duplication

Your content is the heart of your website, and bots can put it at risk. To detect bot traffic, check regularly for duplicate content to make sure scraper bots haven’t visited your site and stolen from you.

Tools such as Siteliner, Duplichecker, and Copyscape make it easy to find out whether your content has been repurposed and published elsewhere.
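Commercial tools don’t publish their exact matching methods, so the following is not how Siteliner, Duplichecker, or Copyscape work internally. It is a minimal sketch of one common near-duplicate technique, word shingling with Jaccard similarity, that you could run on your article and a page you suspect was scraped. The shingle size `k=5` is an assumption.

```python
import re


def shingles(text, k=5):
    """Split text into the set of overlapping k-word sequences ("shingles")."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < k:
        # Short texts become a single shingle so they can still be compared.
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard_similarity(a, b, k=5):
    """Shared shingles divided by total distinct shingles (0.0 to 1.0)."""
    sa, sb = shingles(a, k), shingles(b, k)
    if not sa or not sb:
        return 0.0
    return len(sa & sb) / len(sa | sb)


original = "Bot traffic simply means non-human visitors are coming to your website."
copy = "Bot traffic simply means non-human visitors are coming to your blog."
print(round(jaccard_similarity(original, copy), 2))  # 0.78
```

Scores close to 1.0 suggest a near-verbatim copy; where to draw the line between “inspired by” and “scraped” is a judgment call.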

#5 Server Performance

A slowdown in server performance should be reviewed from a bot traffic perspective. If you’re facing downtime, there’s a good chance your server has received many bot hits within a short period. Bot traffic often degrades server performance, which can directly hurt user experience, site revenue, and your business.
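A burst of hits in a short period shows up clearly if you measure the busiest window in your request timestamps. This sketch assumes you have already parsed request times (in seconds) out of your server logs; the function name is hypothetical, and what counts as an abnormal peak depends on your normal traffic.

```python
from collections import deque


def peak_requests_in_window(timestamps, window_sec):
    """Return the largest number of requests falling inside any
    `window_sec`-second window (half-open: (t - window_sec, t])."""
    window = deque()
    peak = 0
    for t in sorted(timestamps):
        window.append(t)
        # Drop requests that fall outside the window ending at t.
        while window[0] <= t - window_sec:
            window.popleft()
        peak = max(peak, len(window))
    return peak


# Four requests within a third of a second, then two isolated ones.
print(peak_requests_in_window([0, 0.1, 0.2, 0.3, 5, 10], window_sec=1))  # 4
```

Comparing today’s peak with the peak on a typical day gives you a concrete number to go with the downtime you observe.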

#6 Suspicious IPs/Geo-Locations

Geo-locations can also help webmasters identify bot traffic. For example, if you run a local website that covers regional events, a rise in activity from a remote location you don’t serve is suspicious enough to warrant a look for bot activity.
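One way to put a number on activity from a remote location is to measure what share of sessions comes from countries you don’t serve. This sketch assumes you can export a country code per session (for example from your analytics tool or a GeoIP lookup); the function name, the served-country set, and any alert threshold are yours to define.

```python
from collections import Counter


def unexpected_geo_traffic(session_countries, served_countries):
    """Return (share, counts) for sessions from countries outside your market.

    `session_countries` is a list of ISO 3166-1 alpha-2 codes, one per session.
    """
    if not session_countries:
        return 0.0, Counter()
    outside = Counter(c for c in session_countries if c not in served_countries)
    share = sum(outside.values()) / len(session_countries)
    return share, outside


sessions = ["US"] * 6 + ["IN"] * 2 + ["RU"] * 2  # made-up sample
share, counts = unexpected_geo_traffic(sessions, served_countries={"US", "IN"})
print(share, dict(counts))  # 0.2 {'RU': 2}
```

A share that keeps rising over several days is a stronger signal than any single reading.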

#7 Language Sources

Language can also indicate the presence of bot traffic. For instance, if your website’s language and target audience are English, and you see hits from visitors using other languages, this can point to bot activity. As a webmaster, keep tabs on these areas to detect and block bot traffic in real time.
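If you log the `Accept-Language` request header that browsers send, you can check whether a visitor’s preferred languages include the ones your site targets. This is a heuristic sketch, not a reliable bot test: real visitors can use any browser language, and treating a missing header as suspicious is an assumption (some scripted clients send none). The function names are hypothetical.

```python
def primary_languages(accept_language):
    """Parse an Accept-Language header into primary language subtags,
    ordered by descending q-value, e.g. "en-US,en;q=0.9" -> ["en"]."""
    weighted = []
    for part in accept_language.split(","):
        tag, _, params = part.strip().partition(";")
        params = params.strip().lower()
        q = 1.0
        if params.startswith("q="):
            try:
                q = float(params[2:])
            except ValueError:
                q = 0.0  # treat a malformed weight as "not acceptable"
        primary = tag.strip().split("-")[0].lower()
        if primary and primary != "*" and q > 0:
            weighted.append((q, primary))
    weighted.sort(key=lambda item: item[0], reverse=True)  # stable sort
    ordered = []
    for _, lang in weighted:
        if lang not in ordered:
            ordered.append(lang)
    return ordered


def language_mismatch(accept_language, expected=frozenset({"en"})):
    """True if the header is missing/empty or lists none of the expected
    languages. Treating a missing header as a mismatch is an assumption."""
    if not accept_language:
        return True
    return not (set(primary_languages(accept_language)) & set(expected))


print(language_mismatch("en-US,en;q=0.9"))  # False
print(language_mismatch("zh-CN,zh;q=0.9"))  # True
```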

How to Stop Bot Traffic?

Detecting bot traffic is the cue to stop it once and for all. Bots are like viruses hitting your website: they skew your systems, steal your data, and more. Thankfully, there are methods that can help you shield your site against them:

- Legitimate Arbitrage: Buy traffic only from known sources. Many publishers practice traffic arbitrage to run high-yielding PPC/CPM campaigns, and buying only from vetted sources keeps that purchased traffic safe.

- Use Robots.txt: Place a robots.txt file to tell bots which pages they may not crawl. Keep in mind that robots.txt is advisory: well-behaved crawlers honor it, but bad bots can simply ignore it. Publishers should also make sure their crawler rules don’t block the AdSense crawler, to prevent problems with AdSense ads.

- JavaScript for Alerts: Use JavaScript to flag suspected bots. Contextual JS acts as an alarm whenever it detects a bot or similar automated visitor entering the website.

- DDoS Protection: Use distributed denial of service (DDoS) protection. Publishers that maintain a list of offending IP addresses can use DDoS protection to deny requests from those addresses.

- Use Challenge-Response Tests: Add a CAPTCHA to sign-up or download forms. Many publishers and premium websites use CAPTCHAs to stop download and spam bots.

- Scrutinise Log Files: Examine server error log files. Because bots try to overrun servers, examining the server error logs thoroughly helps you find and fix website errors caused by bots.
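To see why robots.txt keeps out only the bots that choose to obey it, here is how a well-behaved crawler checks the rules, using Python’s standard `urllib.robotparser` module. The robots.txt content and the `BadScraperBot` user agent are hypothetical. A malicious bot simply skips this check, which is why robots.txt works best alongside the server-side measures in the list.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: shut out one (made-up) scraper entirely and keep
# every other crawler out of /private/ while leaving the rest of the site open.
ROBOTS_TXT = """\
User-agent: BadScraperBot
Disallow: /

User-agent: *
Disallow: /private/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# A compliant crawler asks before fetching; nothing forces a bad bot to ask.
for agent, url in [
    ("BadScraperBot", "https://example.com/articles/bots"),
    ("Googlebot", "https://example.com/articles/bots"),
    ("Googlebot", "https://example.com/private/report"),
]:
    print(agent, url, parser.can_fetch(agent, url))
```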

Looking for more demand for your inventory? We can help through our partnerships with 20+ global demand partners.

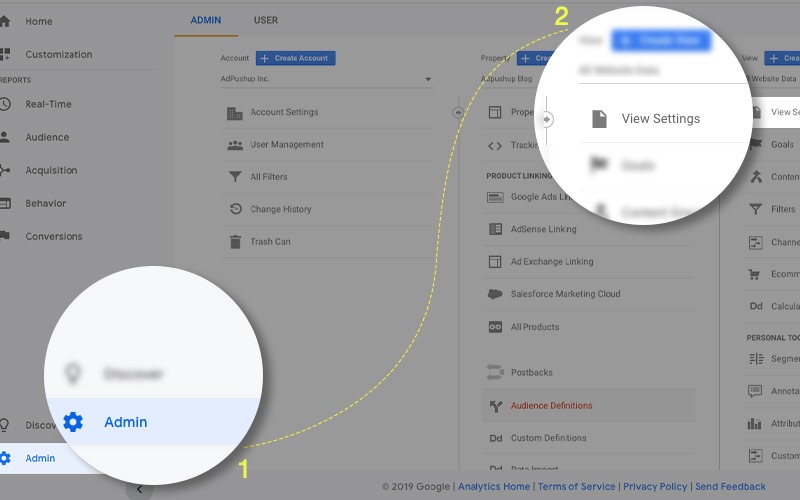

How to Detect Bot Traffic In Google Analytics

Time needed: 3 minutes.

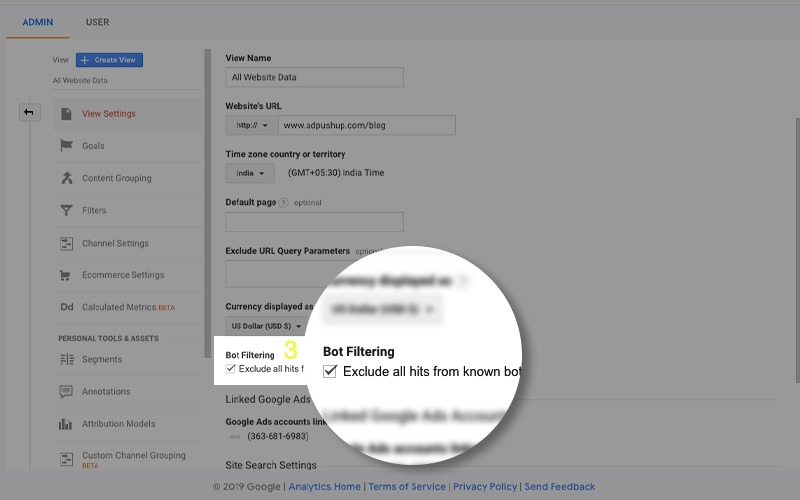

- Admin Panel

Open the Admin panel in Google Analytics and navigate to View Settings.

- Bot Filtering

In ‘View Settings’, scroll down to find the Bot Filtering checkbox.

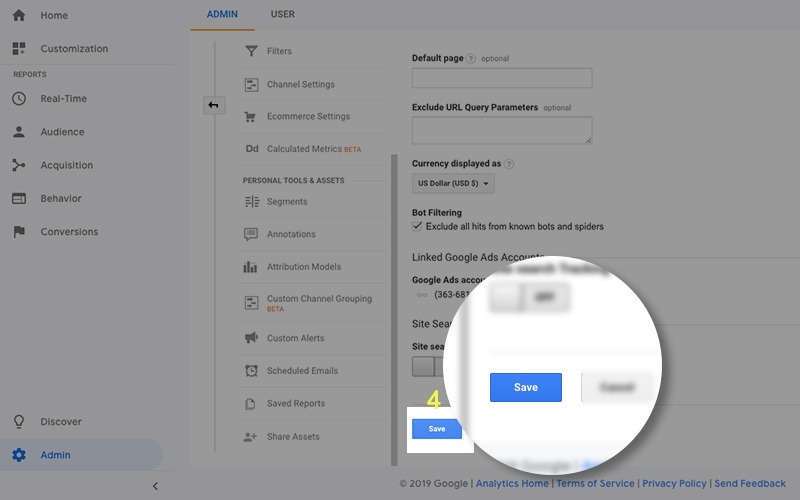

- Check & Save

Select the checkbox if it isn’t already checked, then click Save.

Filtering bot traffic this way keeps hits from known, recognized bots and spiders out of your Google Analytics reports. However, it cannot exclude unidentified or newly emerging bots. Note that these steps apply to Universal Analytics views; Google Analytics 4 properties exclude traffic from known bots and spiders automatically.

Can Bot Traffic Be Ignored?

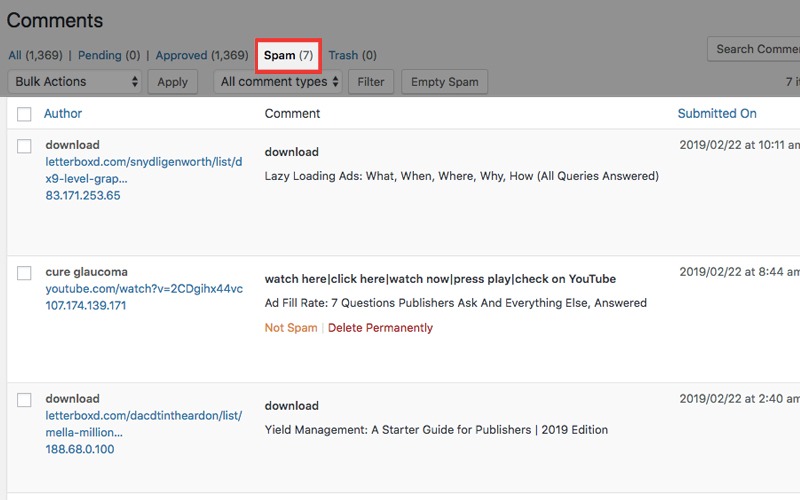

We encounter bad bots on our own website from time to time. Here is an example of spam comments that we received on our blog.

The usual treatment is to mark these comments as spam, and we could have done just that. But since this is our area of expertise, we’re using this opportunity to explain why we chose not to ignore this bot activity, and why publishers shouldn’t either.

Publishers should start caring about bot traffic on their website because their:

- site and ads are also being hurt by ad fraud disguised as bot traffic

- precious data/analytics might be getting skewed

- website load time and overall performance might be deteriorating

- website is getting vulnerable to botnets, DDOS attacks, and bad SEO

- CPC and revenue are getting severely affected by fake clicks

In Closing:

Surprisingly, only 5% of publishers work with a dedicated fraud-patrol professional. In other words, publishers are being hit by ad fraud methods (bot traffic being one), but most are doing little about it.

We encourage publishers to consistently check for bot traffic on their website as it could lead to revenue loss and bad user experience.

Frequently Asked Questions:

What is bot traffic?

Bot traffic simply means non-human visitors are coming to your website. Whether yours is a big, popular website or a new one, a certain percentage of bots will pay you a visit at some point.

What types of bots should publishers watch out for?

- Click Bots

- Download Bots

- Spam Bots

- Spy Bots

- Imposter Bots

- Scraper Bots

How can publishers identify bot traffic?

- Recheck Page Load Speed

- Keep Tabs On Certain Metrics

- Verify Traffic Sources And IP Address

- Test For Content Duplication

Shubham is a digital marketer with rich experience in the advertising technology industry. He has vast experience in the programmatic industry, driving business strategy and scaling functions including, but not limited to, growth and marketing, operations, process optimization, and sales.
