There is no rulebook that says which ad practices drive the most revenue. So publishers switch between ad types or sizes to see which ones get more impressions, or they simply go with their gut. The result? They never get empirical data on what actually works and what doesn’t.

Ad testing is a form of A/B testing, i.e., the comparison of performance between two variants. But it is not limited to the ad type (for the publisher) or, say, the color of the CTA button (for the advertiser). In ad tech, the scope of testing has expanded far beyond such simple comparisons, and publishers now have granular data to make informed decisions.

Different types of testing, on different elements and parts of a website, empower publishers with the data they need to grow. Each test has a different aim but a common goal: to increase ad revenue. For example, an A/B test between two ad sizes might aim for more ad impressions, while an A/B test between static and dynamic ad types may target a better CTR.

There are many testing ideas that publishers can try to find out what works best for them. But whatever the case, the rule of thumb is that publishers have to test. Let’s move along and discuss some ad testing ideas that may help publishers get started.

1. Ad Format Testing

What to test: Which ad type/format gets better CTR.

How to test: Once you are sure which page and ad format/type you want to experiment on, start by creating variations. For instance, if your page has a display ad placed in the sidebar, try a text ad variation in the same spot to see if it makes a difference to the click-through rate (CTR).

If you are new to testing, it is advisable to experiment on a page with relatively little traffic. That way, you can get the insight you need to make a decision without disrupting anything. For example, a month-old blog is a better option than an older, high-traffic website that is already generating revenue.
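
A CTR gap between two variants only matters if it is unlikely to be noise. As a minimal sketch (not part of any ad platform's tooling), the comparison could be checked with a two-proportion z-test. The function name `ctr_z_test` and all click and impression counts below are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def ctr_z_test(clicks_a, impressions_a, clicks_b, impressions_b):
    """Two-sided two-proportion z-test comparing the CTR of two ad variants."""
    ctr_a = clicks_a / impressions_a
    ctr_b = clicks_b / impressions_b
    # Pooled CTR under the null hypothesis that both variants perform the same.
    pooled = (clicks_a + clicks_b) / (impressions_a + impressions_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / impressions_a + 1 / impressions_b))
    z = (ctr_b - ctr_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return ctr_a, ctr_b, z, p_value


# Hypothetical numbers: display ad (A) vs. text ad (B) in the same sidebar slot.
ctr_a, ctr_b, z, p_value = ctr_z_test(120, 10_000, 165, 10_000)
print(f"CTR A={ctr_a:.2%}  CTR B={ctr_b:.2%}  z={z:.2f}  p={p_value:.4f}")
```

A p-value below a threshold chosen in advance (0.05 is a common convention) suggests the gap is unlikely to be chance. The sketch assumes both variants have recorded at least some clicks.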

2. Ad Size Testing

What to test: Which ad size/dimension gets the most impressions.

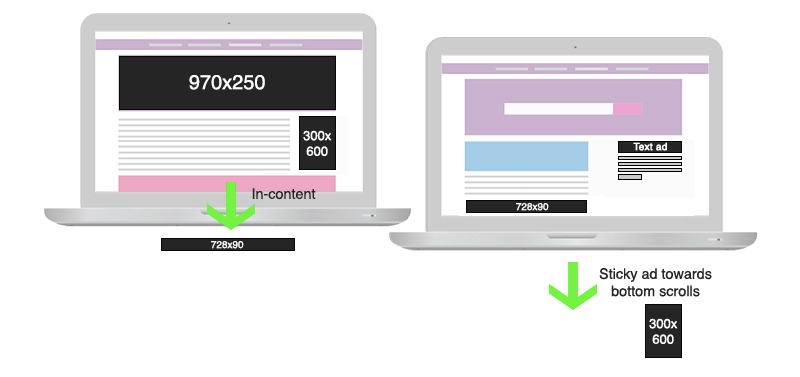

How to test: There are certain ad sizes that are proven to get good results, like the leaderboard (728×90) and medium rectangle (300×250). However, depending on the website layout, the performance of these sizes may vary. For example, a clean website with generous white space should complement a bigger ad size like 970×250, placed just below the navigation. On the other hand, for a website with many click points and attractions, a 728×90 placed above or between the content might work.

Testing with ad sizes is highly dependent on the website layout. The present spacing on your website should give you a visual understanding of what ad size can fit where without hampering the UX.

3. Ad Placement Testing

What to test: Which ad placement/spot gets better CTR.

How to test: As we know, the human eye naturally scans from left to right. Based on this knowledge, content is kept on the left side of the webpage and ads to the right. The downside is that now users can easily predict where the ads exist, and mentally tune them out, which affects CTR. Hence, to test, you can place the ads towards the left, in alignment with the main content. Make sure not to overlap the content.

Similarly, if you are experimenting with above-the-fold ads, make sure the main purpose of the page stays above the fold. For example, if a site offers a resource to download, ensure that the CTA can be found above the fold. Poor ad placements can hurt ad revenue. Consider referring to resource guides that cover the do’s and don’ts of ad placements.

4. Ad Layout Testing

What to test: Which ad combinations generate better revenue.

How to test: Testing one ad size vs. another or one ad format vs. another is easy. But what about the whole layout? Using ad layout testing tools, it is possible to create variations of entire ad layouts with specific combinations of different ad formats, sizes, and placements.

A layout testing tool gives you the ability to create variants and compare performance at the website level. This is just like running tests between versions of webpages, email sign-up bars, or exit-intent pop-ups, but on a bigger scale. The winning ad combination gets the majority of the traffic, driving up revenue.
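
How a particular tool shifts traffic toward the winner is vendor-specific. One simple way to picture it is an epsilon-greedy split: most page views go to the layout with the best observed revenue per view, while a small share keeps exploring the others. The sketch below is an illustration under that assumption, not any tool's actual algorithm. The class name, layout names, and revenue figures are hypothetical, and revenue per view is held constant to keep the example short.

```python
import random


class LayoutAllocator:
    """Epsilon-greedy traffic split across ad layout variants (illustrative only)."""

    def __init__(self, layouts, epsilon=0.1, seed=None):
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.stats = {name: {"views": 0, "revenue": 0.0} for name in layouts}

    def revenue_per_view(self, name):
        s = self.stats[name]
        return s["revenue"] / s["views"] if s["views"] else 0.0

    def choose(self):
        names = list(self.stats)
        # Serve every layout at least once before favoring the leader.
        unseen = [n for n in names if self.stats[n]["views"] == 0]
        if unseen:
            return self.rng.choice(unseen)
        if self.rng.random() < self.epsilon:
            return self.rng.choice(names)  # explore a random layout
        return max(names, key=self.revenue_per_view)  # exploit the current leader

    def record(self, name, revenue):
        self.stats[name]["views"] += 1
        self.stats[name]["revenue"] += revenue


# Hypothetical average revenue (USD) per page view for each layout.
TRUE_REVENUE = {"layout_A": 0.0020, "layout_B": 0.0030, "layout_C": 0.0025}

allocator = LayoutAllocator(TRUE_REVENUE, epsilon=0.1, seed=42)
for _ in range(5_000):
    layout = allocator.choose()
    allocator.record(layout, TRUE_REVENUE[layout])

for name, s in allocator.stats.items():
    print(name, s["views"], f"{allocator.revenue_per_view(name):.4f}")
```

With real, noisy revenue data the leader can change over time, which is why the exploration share is kept above zero.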

5. User Device Testing

What to test: Which ad variation gets better impressions/CTR on which device.

How to test: It is healthy to keep track of device-wise traffic, owing to changing trends in device usage. In 2018, 52.2% of all website traffic worldwide was generated through mobile. By 2019, 63.4% of mobile phone users were expected to access the Internet from their phones. Tools like Google Analytics help keep track of the device/traffic split. Here’s where testing starts. For example, you might receive 10K users from mobile in two weeks and 8K users from desktop in the same period. This means that your mobile traffic has surpassed your desktop traffic, and hence your mobile ads need closer attention.

Device-wise ad testing is also tied to the audience targeting you do. When you target an audience by device (e.g., mobile), of course you’ll get more traffic from there. Hence, experimenting with ad formats and sizes makes more sense on that particular device. Going deeper, there is further segmentation within devices, such as device brand, viewport size, OS, and browser, which creates a broader scope for testing.
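
The 10K mobile vs. 8K desktop example can be turned into a quick calculation. The sketch below computes each device's share of users and then picks the ad variant with the best CTR per device. The function names and the per-variant click and impression counts are hypothetical, standing in for figures you would export from your own analytics.

```python
def traffic_share(users_by_device):
    """Return each device's share of total users."""
    total = sum(users_by_device.values())
    return {device: users / total for device, users in users_by_device.items()}


def best_variant_per_device(results):
    """Pick the highest-CTR ad variant for each device."""
    best = {}
    for (device, variant), (clicks, impressions) in results.items():
        ctr = clicks / impressions
        if device not in best or ctr > best[device][1]:
            best[device] = (variant, ctr)
    return best


shares = traffic_share({"mobile": 10_000, "desktop": 8_000})

# (device, ad variant) -> (clicks, impressions); made-up numbers for illustration.
results = {
    ("mobile", "320x50"): (90, 6_000),
    ("mobile", "300x250"): (132, 6_000),
    ("desktop", "728x90"): (70, 5_000),
    ("desktop", "300x250"): (60, 5_000),
}
winners = best_variant_per_device(results)

for device, (variant, ctr) in winners.items():
    print(f"{device}: {shares[device]:.1%} of users, best variant {variant} (CTR {ctr:.2%})")
```

In this example mobile accounts for roughly 56% of users, so a winning mobile variant affects more sessions than a winning desktop one.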

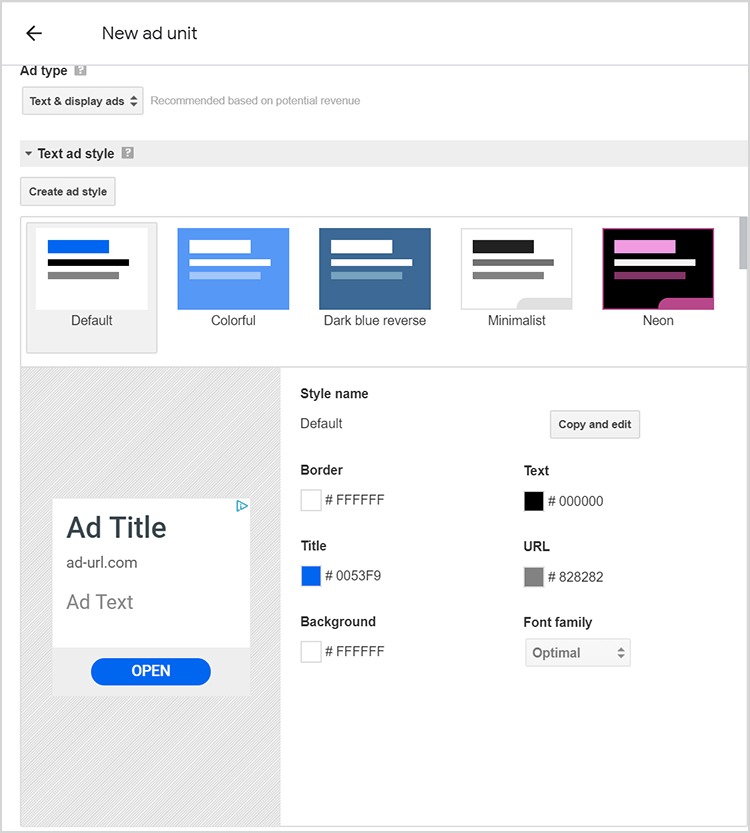

6. Ad Color Testing

What to test: Which text ad presentation gets better CTR.

How to test: Text ads are plain ads that blend in with the webpage and are known to achieve better click-through rates than standard display ads, especially on content-rich websites and blogs. If you are an AdSense publisher, the dashboard gives you the option to choose and set the style for text ads, including the colors and font family used.

Text ads are known to be subtle. They are not flashy, yet they catch user attention because of their text-like appearance. To make the most of text ads, you can play with the color and presentation to see how well they work for your website. Don’t forget to test variants.

Some More Testing Considerations

Ads prove effective when everything works well, including design, content, layout, and UX. Here are some things publishers must consider to make their ad experience more successful.

1. Webpage Layout

You may have conducted tests on ad formats, sizes, placements, and layouts. But is your website ready to benefit from ad testing? Tools like Optimizely and VWO can help you run efficient A/B tests for the content on webpages, with the ability to help you create webpage variations.

This is similar to using ad layout testing tools to create ad layout variations. These tools automatically send traffic to the more effective variations, giving you the insight you need to make on-page improvements.

2. Content Type

We know that users come to a website in search of useful content, so ‘optimize your page content’ is hardly new advice. Here’s how the content and ad cycle runs: users come to the website and engage with it while reading, ads load and receive impressions for as long as the user stays on the page, and those impressions (and clicks) add to your ad revenue.

You can test which content type works better for you, e.g., listicles, long-form content, resources/guide, or illustrations. The aim of testing out content is to achieve a better engagement rate, gauged via metrics like session duration or time on site. This method can also be effective in planning out your long term content strategy.
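
As a rough sketch of that comparison, session durations can be grouped by content type and summarized. The median is used here instead of the mean so that a few very long or very short sessions do not dominate. The content type labels and durations (in seconds) are hypothetical sample data.

```python
from collections import defaultdict
from statistics import median

# (content type, session duration in seconds); hypothetical sample data.
sessions = [
    ("listicle", 95), ("listicle", 140), ("listicle", 60),
    ("long_form", 310), ("long_form", 45), ("long_form", 280),
    ("guide", 200), ("guide", 230), ("guide", 170),
]

durations = defaultdict(list)
for content_type, seconds in sessions:
    durations[content_type].append(seconds)

median_by_type = {ctype: median(values) for ctype, values in durations.items()}
most_engaging = max(median_by_type, key=median_by_type.get)

for ctype, value in sorted(median_by_type.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{ctype}: median session {value} s")
print("Most engaging content type:", most_engaging)
```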

3. Ads by the UI

Sometimes, testing the website layout or content type may not be your immediate priority. In such cases, you can test ad type, size, and placement based on the existing UI. For example, if your website gets more engagement on its above-the-fold section because of videos, you can try using pre-roll or rich media ads in that section.

Similarly, if your website has infinite scroll enabled, a sticky ad could work well. If testing the entire UI is not possible, spend some time analyzing the characteristics of your website to find the right ad spots and ad types to experiment with.

4. Browser/OS

According to Wikipedia, the worldwide market share in May 2019 for Chrome stood at 69.09%, followed by Firefox at 10.01% and Safari at 7.25%. Your users could be anywhere. Tools like Google Analytics provide a browser traffic split for your visitors, telling you which browser your users favor.

Using that data, you can conduct browser-wise ad testing to optimize performance. You can also check whether all your ads render properly across browsers. For instance, your website and ads may appear distorted on Chrome but fine on Mozilla Firefox.

5. Ad Networks

Testing amongst ad networks or demand sources may not sound as creative as experimenting with ad sizes, formats, or placements. However, demand being one of the most important factors in revenue, it is wise to test which ad network helps generate the most revenue for your inventory.

Ad networks like AdSense, and many others, give you the option to work with multiple ad networks simultaneously. The network-wise yield reports give you clear insight into which ad network is worth investing in, and which is not.
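
One common way to compare networks on equal footing is effective CPM (eCPM): revenue divided by impressions, multiplied by 1,000. The sketch below ranks networks by eCPM. The `NetworkReport` class, the network names, and all figures are hypothetical and stand in for rows from your own yield reports.

```python
from dataclasses import dataclass


@dataclass
class NetworkReport:
    network: str
    impressions: int
    revenue: float  # USD earned over the reporting period

    @property
    def ecpm(self):
        """Effective revenue per 1,000 impressions."""
        return self.revenue / self.impressions * 1000 if self.impressions else 0.0


# Hypothetical yield report rows for the same period and inventory.
reports = [
    NetworkReport("network_a", 400_000, 520.0),
    NetworkReport("network_b", 250_000, 400.0),
    NetworkReport("network_c", 150_000, 150.0),
]

ranked = sorted(reports, key=lambda r: r.ecpm, reverse=True)
for r in ranked:
    print(f"{r.network}: eCPM ${r.ecpm:.2f} on {r.impressions:,} impressions")
```

Note that the network with the highest total revenue here (network_a) is not the one with the highest eCPM (network_b), which is the kind of difference a yield comparison is meant to surface.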

Types of Testing

A/B testing is the first thing that comes to most people’s minds when they hear “testing.” But there is also multivariate testing, which allows publishers to test more than two variants. Here are the two types of testing along with their pros and cons:

A/B testing: Also called split testing, it allows you to create a main control unit and a variation to test against it. An existing control unit may already have a statistical baseline. That data can serve as a benchmark against which you run the variation and compare performance. In A/B testing, only one variation is tested, which gives a clearer understanding of how changes affect results.

Pro: Once you have some tests running, you can expand to multiple tests. For example, after control unit A (300×250) vs variation B (300×600), you can further compare variation B (300×600) with variation C (728×90), and so on.

Con: In a continuous process, manual A vs B testing can be slow and laborious. That is where automated A/B testing and optimization tools come to the rescue.

Multivariate testing: As the name suggests, multivariate testing allows you to experiment with more than two variants simultaneously. For example, with ad size as the only parameter in A/B testing, you may compare the performance of A vs B, and then C. In multivariate testing, you can test A vs B vs C across multiple parameters like ad size, placement, and type at the same time.

Pro: The ability to run multiple tests at the same time saves effort.

Con: With so many testing parameters, there can be too much data at your disposal, which makes it difficult to draw conclusions.
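
To see why the data grows so quickly, consider every combination of a few parameters. The sketch below enumerates a full set of combinations for three parameters and shows how thinly a fixed amount of traffic gets spread across them. The parameter values and the daily page view figure are hypothetical.

```python
from itertools import product

# Hypothetical parameters and the values to test for each one.
factors = {
    "size": ["300x250", "300x600", "728x90"],
    "placement": ["sidebar", "in_content"],
    "format": ["display", "text"],
}

# Every combination of one value per parameter.
combinations = [dict(zip(factors, values)) for values in product(*factors.values())]

daily_pageviews = 12_000  # assumed traffic, split evenly across combinations
views_per_combination = daily_pageviews // len(combinations)

print(f"{len(combinations)} combinations, about {views_per_combination} page views each per day")
for combo in combinations[:3]:
    print(combo)
```

Three sizes, two placements, and two formats already produce 12 variants, so each one receives only a twelfth of the traffic that a single A/B variant would share with its control.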

Why Testing is Advantageous

Continuous testing expedites performance results and solves some common publisher problems. Here are some of them:

- Everything publishers do is to eventually increase ad revenue. Successful testing helps publishers grow CPM/CTR, which directly impacts the ad revenue.

- Comparison data between variations gives publishers the insights they need to make a better website and run better ads, thereby improving user experience.

- Testing ad formats and ad placements helps combat banner blindness, which occurs when an existing ad layout becomes predictable and users consciously or unconsciously ignore ads, resulting in a CPM and CTR decline for publishers.

- Testing gives access to actionable data and insights. This helps publishers make evidence-based decisions instead of relying on trial and error.

- Publishers seek better control over the ads they run and optimizations they do. Different types of ad testing give them proper monitoring and control over data and decisions.

- Manual testing may be effective, but it’s also time-consuming. With growth in ad tech, publishers now have access to tools that automate ad testing, making it more efficient.

Bad Testing Habits to Avoid

Testing may be fun, effective, and potentially profitable. But overdoing it can backfire. By now, if you have already thought of a rough ad testing plan for yourself, here are some points you need to remember first:

- Keep calm and give testing the time it needs. It would be wrong to expect substantial data from two days of testing.

- Don’t try to over-optimize. You might have any number of things to test. But testing them all at once will make it harder to ascertain which test or parameter actually led to success.

- Run tests, but not at the cost of user experience. It is fine if you go through a design overhaul or a complete revamp. But make sure you always keep user experience in mind.

- What worked then may not work now or later. Testing results are based on users’ responses to changes. But since traffic volume and user behaviour keep changing, the ad test that worked wonders for you three months ago may not bring the same results now.

- Don’t run ad tests randomly and blindly. After reading a few articles (including this one), you may get a few ad testing ideas. But it is advisable to first look into your historical data to see whether such tests have been performed before and, if so, what impact they had.

- Run the right tests at the right time. Testing may sound fun, but you wouldn’t want to run a test at a time when it could backfire. For example, if December is your highest revenue month, you would probably want to stick to your existing setup to avoid uncertainties.
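
To put a number on "the time it needs", a standard sample size approximation for comparing two proportions gives a rough idea of how many impressions each variant requires before a CTR lift can be detected. This is a sketch under textbook assumptions (two-sided test, 5% significance, 80% power), and the baseline CTR, target CTR, and daily impression count are hypothetical.

```python
from math import ceil, sqrt
from statistics import NormalDist


def impressions_per_variant(base_ctr, target_ctr, alpha=0.05, power=0.8):
    """Approximate impressions each variant needs to detect base_ctr vs. target_ctr."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance level
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (base_ctr + target_ctr) / 2
    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * sqrt(base_ctr * (1 - base_ctr) + target_ctr * (1 - target_ctr))
    ) ** 2
    return ceil(numerator / (target_ctr - base_ctr) ** 2)


# Hypothetical test: lift CTR from 1.0% to 1.2% with 2,500 daily impressions per variant.
needed = impressions_per_variant(0.010, 0.012)
daily_impressions_per_variant = 2_500
days = ceil(needed / daily_impressions_per_variant)

print(f"About {needed:,} impressions per variant, roughly {days} days at this traffic level")
```

Under these assumptions the test needs well over two weeks, and smaller expected lifts or lower traffic stretch that further.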

Wrap Up

Google Ad Manager (formerly DFP) supports test creatives and other ad testing functionality that can help publishers understand their inventory better.

Ad testing has helped publishers increase page RPM and CPM by as much as 41% and 77.4% respectively, though results always vary by publisher and use case. Whether you choose to test manually or with automation tools, regularly or occasionally, the conclusion is that testing should be the rule, not the exception.

For instance, without testing you might never find out that a small rectangle could bring you a better CTR than a leaderboard. Moreover, testing helps you define your long-term strategies as a publisher.

Similarly, if you have proven data that X ad type drives the highest attention and engagement for you, you can change your inventory accordingly to receive better bids from advertisers. These days, getting started with an ad testing plan is much easier. There are tools and ad ops professionals who do the legwork on your behalf, while you get to monitor the performance and focus on your growth.

Shubham is a digital marketer with rich experience working in the advertisement technology industry. He has vast experience in the programmatic industry, driving business strategy and scaling functions including but not limited to growth and marketing, Operations, process optimization, and Sales.