The one-size-fits-all approach to advertising never cuts it. Your ability to create user-friendly ads depends on how well you experiment with different formats, sizes, color combinations, and placements.

Your ads may be good enough to avoid policy violations in Google Ad Manager, but if you want incremental growth, there’s always room for customization and experimentation.

Continue reading to find out how you can use Google’s new manual experiment feature to capture more revenue.

What is a Manual Experiment in Ad Manager?

Google has developed a new tool called ‘Manual Experiments’ to help publishers run their own tests or experiments in Google Ad Manager. With this new feature, publishers will be able to create and run experiments and analyze results within the existing GAM framework.

In other words, the manual experiment feature helps publishers maximize their revenue with manual opportunities for improving yield.

Google defines manual experiments as:

“An experiment you define based on your own criteria and schedule. The experiment runs on a percentage of your network’s actual traffic to test how applying those settings would impact revenue.”

– Google

Publishers can run up to 100 active experiments within their existing Ad Manager network at any given time. Active experiments include trials that are running, completed, paused, or waiting for you to take action.

Types of Manual Experiments

As of now, publishers can run three types of experiments in Ad Manager:

- Category block experiments:

Publishers can run experiments to unblock categories from their existing protections and increase coverage.

Through category block experiments, publishers can analyze the impact of running specific ad types on currently blocked sites.

- Native ad style experiments:

Publishers can experiment with two different visual elements and native ad styles to see which one will perform better.

Native ad style experiments can only compare native styles on the existing native placements. You cannot compare multi-format ads in the same placement.

- Unified pricing rule experiments:

This manual experiment type allows publishers to test changes to unified pricing rules, which helps them understand their impact on the publisher’s exchange inventory.

In other words, publishers can review the impact of increasing or decreasing the price floor on specific inventory.

Note: Google plans to roll out more experiment types in the future based on user feedback and demand.

How Does It Work?

The manual experiment feature is built on Ad Manager’s existing Opportunities and Experiments tool, which uses Google’s experiment framework and machine learning to automatically add suggestions for publishers.

In Ad Manager, you can check the list of available manual experiment types and review your active experiments by clicking on Home > Reporting > Experiments.

You can conduct manual experiments by selecting the experiment type and criteria of your choice. The tool asks you to define an experiment trial before running it for a specified period.

You can compare impression traffic allocated to the ‘control’ and ‘experiment’ groups to see which performed better during the experiment.

You can also run an experiment from an opportunity suggested by Ad Manager.

Furthermore, the tool allows you to run additional trials if needed and apply experiment settings to your Ad Manager network.

How to run a manual experiment in GAM?

Follow these seven steps to run a manual experiment in Google Ad Manager:

- Sign in to your GAM account.

- On the dashboard, click Reporting.

- Click Experiments > New experiment for the experiment type you prefer.

- Enter the name of your experiment.

- Enter a start and end date for the experiment trial.

- Set the percentage of impression traffic that you want to allocate to the experiment.

- Click Save.

Note: Each experiment needs to run for a minimum of seven days to get conclusive results. Publishers can set a trial to start immediately after saving the settings or schedule it for later.

The maximum duration for the experiment is 31 days. The trial will expire after this period. Once the trial ends, you can evaluate the results to take the following steps:

- Apply experiment result as an opportunity

- End the experiment and keep original settings

- Run another trial to find better opportunities
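Before you enter a trial in the interface, it can help to sanity-check the plan against the limits described above. The sketch below is only a planning aid: `TrialPlan` and `check_trial` are hypothetical names, not part of any Google Ad Manager API, and it assumes that the start and end dates both count toward the duration and that the traffic percentage must fall strictly between 0 and 100.

```python
from dataclasses import dataclass
from datetime import date

MIN_DAYS = 7   # minimum trial length mentioned in this article
MAX_DAYS = 31  # maximum trial length mentioned in this article


@dataclass
class TrialPlan:
    """Hypothetical planning record; not a Google Ad Manager object."""
    name: str
    start: date
    end: date
    traffic_percent: float  # share of impression traffic for the experiment group


def check_trial(plan: TrialPlan) -> list[str]:
    """Return a list of problems; an empty list means the plan fits the limits."""
    problems = []
    days = (plan.end - plan.start).days + 1  # assumption: both dates are inclusive
    if days < MIN_DAYS:
        problems.append(f"trial lasts {days} days; minimum is {MIN_DAYS}")
    if days > MAX_DAYS:
        problems.append(f"trial lasts {days} days; maximum is {MAX_DAYS}")
    if not 0 < plan.traffic_percent < 100:  # assumption: both groups need traffic
        problems.append("traffic_percent must be between 0 and 100")
    if not plan.name.strip():
        problems.append("name must not be empty")
    return problems


plan = TrialPlan("Floor +10% test", date(2024, 3, 1), date(2024, 3, 21), 10.0)
print(check_trial(plan))
```

A three-week plan like this one sits inside the 7 to 31 day window, so the check reports no problems; shortening it to three days would flag the minimum.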

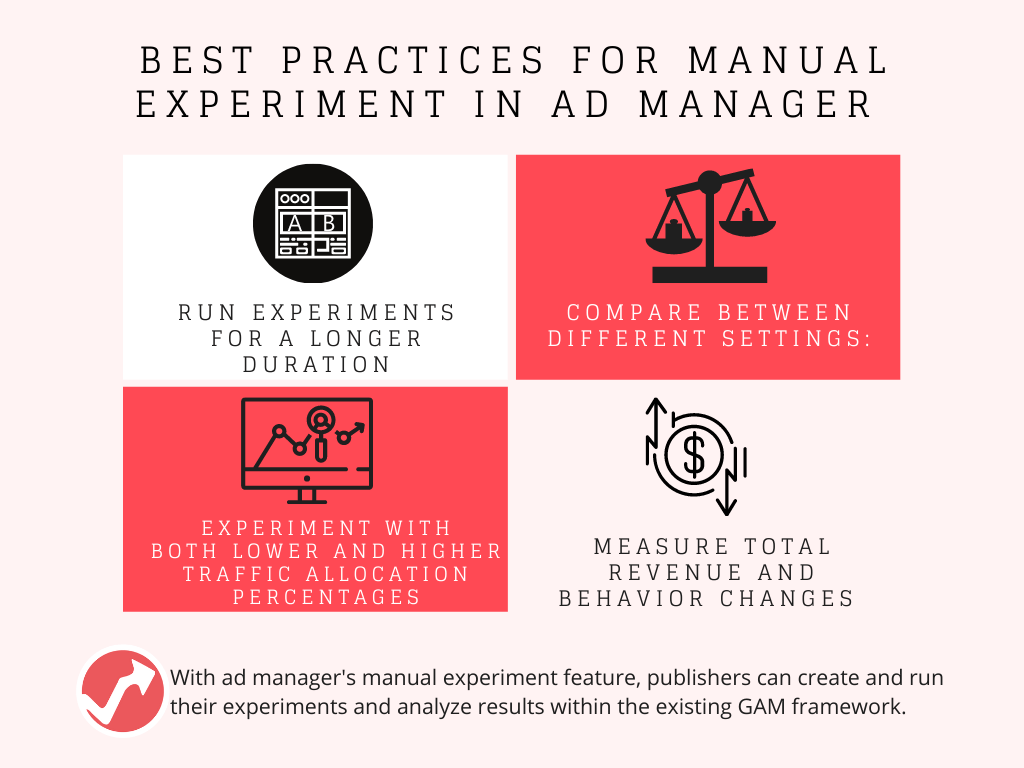

Manual experiment best practices:

Google recommends the following practices for publishers who want to get the most out of manual experiments:

1. Run experiments for a longer duration

It’s important to run these experiments over a long period to understand audience behavior and study the impact of changes you’ve made.

As mentioned above, the maximum duration for running a trial is 31 days, and the minimum is 7. Ideally, publishers need 2-3 weeks to get a grasp of behavior changes.

2. Comparison between different settings:

You need to make a thorough comparison of both settings to get an understanding of audience behavior.

Make sure to compare impression traffic allocated to the ‘control’ and ‘experiment’ groups to see which performed better during the experiment.

3. Consider total revenue:

In addition to comparing behavior changes in the experiment and control groups, you should look at the revenue numbers too, because experiment settings are likely to impact your total revenue.

You should verify whether the revenue in the experiment group is in line with what you expected for your network as a whole.
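Because the two groups receive different shares of traffic, raw revenue totals are not directly comparable. One way to reason about it, sketched below, is to normalize revenue per thousand impressions in each group and then project the difference onto all traffic. The function names and figures are made up for illustration, and the projection assumes that the control and experiment groups together cover the whole network and that the per-impression rates would hold at full scale.

```python
def per_thousand(revenue: float, traffic: int) -> float:
    """Revenue per 1,000 units of traffic (impressions in this sketch)."""
    return revenue / traffic * 1000


def compare_groups(control_revenue: float, control_traffic: int,
                   experiment_revenue: float, experiment_traffic: int) -> dict:
    """Compare normalized revenue between the control and experiment groups."""
    control_rate = per_thousand(control_revenue, control_traffic)
    experiment_rate = per_thousand(experiment_revenue, experiment_traffic)
    # Relative lift of the experiment group over the control group.
    lift = (experiment_rate - control_rate) / control_rate
    # Assumption: the two groups together represent all network traffic.
    total_traffic = control_traffic + experiment_traffic
    # Projected revenue change if the experiment settings applied to all traffic.
    projected_change = (experiment_rate - control_rate) * total_traffic / 1000
    return {
        "control_rate": control_rate,
        "experiment_rate": experiment_rate,
        "lift": lift,
        "projected_change": projected_change,
    }


# Made-up figures: 90% of traffic in control, 10% in the experiment group.
result = compare_groups(1800.0, 900_000, 210.0, 100_000)
print(f"lift: {result['lift']:.1%}")
print(f"projected change: {result['projected_change']:+.2f}")
```

With these illustrative numbers the experiment group earns about 5% more per thousand impressions, even though its raw revenue is far smaller than the control group's.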

4. Experiment more often

Experiments are very likely to encourage behavior change amongst your target audience. Google recommends publishers run more trials to get a deeper understanding of audience behavior.

It’s better to experiment with both lower and higher traffic allocation percentages. While lower traffic allocations might not reflect big behavioral changes, they’re also less risky (if the trial has a negative impact).

We suggest allocating traffic percentages from lower to higher. Once you find your trials performing at an acceptable level, you can start another test with the same settings at a higher traffic allocation to measure the effects.
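The reasoning behind starting small can be made concrete with simple arithmetic. The sketch below estimates how much network-wide revenue is exposed at each allocation level if the experiment group underperforms. The function name is hypothetical, the 20% drop is an arbitrary worst case chosen for illustration, and the estimate assumes the rest of the network is unaffected by the trial.

```python
def network_impact(traffic_percent: float, group_change_percent: float) -> float:
    """Approximate network-wide revenue change (in percent) when only the
    experiment group's revenue changes by group_change_percent."""
    return traffic_percent / 100 * group_change_percent


# Hypothetical worst case: the experiment group earns 20% less than control.
for pct in (5, 10, 25, 50):
    impact = network_impact(pct, -20)
    print(f"{pct:>2}% allocation -> {impact:+.1f}% network revenue")
```

Under these assumptions, a trial on 5% of traffic puts roughly 1% of network revenue at risk, while the same settings on 50% of traffic would put roughly 10% at risk, which is why a gradual ramp is the safer path.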

Publishers have started experimenting:

Publishers like The Atlantic, UOL, and Storer have already started using manual experiments in Google Ad Manager, and they are seeing success.

In fact, publishers who used manual experiments during the beta period achieved a 6.5% revenue increase.

Similarly, in 2020, publishers who used the Opportunities and Experiments tool accepted over 2,000 opportunities and gained 3.5% more revenue.

“We’ve been able to seamlessly run experiments using Manual Experiments – giving us insight into the impact of different floor prices and helping us determine how to effectively price floors to earn the most revenue. We’re excited to see how this feature evolves.”

– Ryan McConaghy, The Atlantic.

The new addition to GAM is helping publishers test pricing rules as well:

“With Manual Experiments, we now have an important tool to speed up and simplify the testing and optimization process in our unified price rules decisions.”

– Fabricio Gomes, UOL.

In Closing:

We’ve focused on manual experiments for a reason: in today’s dynamic environment, the best way to improve revenue is to test and experiment.

Ads often become a victim of banner blindness and poor UX. It’s time to scrap your traditional ads.

We encourage you to keep A/B testing individual elements of your ads to see what clicks with your audiences.

Ready to start manual experiments? Get a demo of AdPushup to find out how to use its built-in ad optimization and experimentation features for more ad revenue.

FAQs

In custom experiments, you can test Smart Bidding, keyword match types, landing pages, audiences, and ad groups. Custom experiments are available for Search and Display campaigns. It is now possible to create an experiment without first creating a draft.

Using a campaign experiment, you can compare the performance of your draft to that of the original campaign. For a specified period of time, experiments use a portion of the original campaign’s traffic and budget.

Google Ad Manager estimates the value of campaigns based on the CPM (cost per thousand impressions) you specify. Amounts entered in the “Value CPM” field are used in revenue calculations.

Shubham is a digital marketer with rich experience in the advertising technology industry. He has extensive experience in the programmatic industry, driving business strategy and scaling functions including but not limited to growth and marketing, operations, process optimization, and sales.